How Much Money Can You Save From The $62 Billion Overspend In Cloud Infrastructure?

Migration to the cloud has accelerated this year, and the trend is expected to continue into 2025. Both public and private cloud expenditure have grown. However, research suggests companies overspend in the cloud by approximately $62 billion each year. Some have suggested that cost savings in the cloud aren’t real; this is far from the truth. How can you tap into your share of this $62 billion overspend and reallocate that capital to functions where it can produce measurable returns?

Companies looking to move infrastructure to the cloud use cost savings calculators that show savings of over 16%. Most companies realize these savings, which is fueling further adoption of the cloud. Each of the handful of major cloud providers (Amazon, Azure, Google, IBM) has standardized visibility into its utilization metrics, which in the past amounted to flashing lights in a dark, cold room: the data center. Standardized access to actual utilization across resources is what provides this visibility.

Infrastructure Usage Forecasting Science

To appreciate how this $62 billion excess has come about, one needs to understand the science of provisioning infrastructure. The forecasting science used in lifting and shifting infrastructure workloads to the cloud was developed over the last two decades of server technology and infrastructure. Two underlying assumptions have crept into this provisioning science: a) infrastructure resources are limited, and b) obtaining budgets and procuring infrastructure can take from one to three years. A third factor, the way enterprises allocate infrastructure budgets, also leads to over-provisioning. Budgets progressively increase year on year, but they shrink when unused dollars show up in budget review cycles, so there is an incentive to provision up to the full allocation.

The cloud is a game changer for both of these underlying assumptions. First, the available capacity of infrastructure in the cloud is near infinite. Second, procurement has been replaced by provisioning: with infrastructure becoming like software code, provisioning takes seconds to a minute. Provisioning a specific type of computing infrastructure in the cloud requires just a simple one-line command that executes in under a minute. In the traditional data center world, the equivalent is moving a 50-pound server from a pickup truck onto a server rack and then adding power, or opening up a server to add or remove memory or storage.

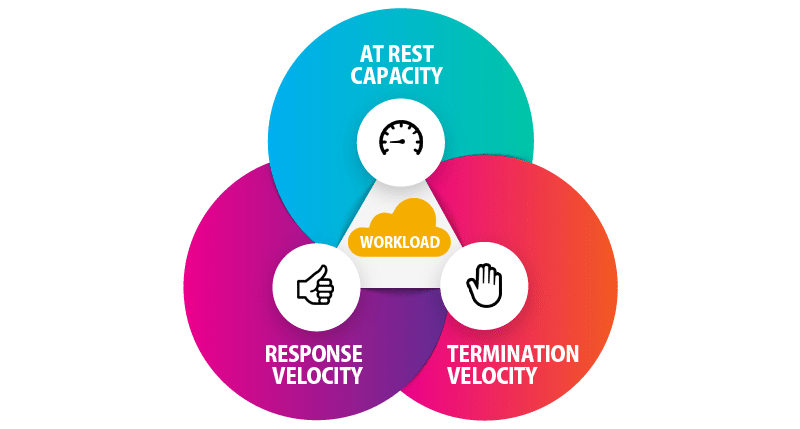

Capacity planning in the cloud requires a new thought process, with new metrics, in light of technological advancements. Thanks to improvements in virtualization technologies, cloud provisioning makes it easy to allocate cost down to a specific application or to even more granular levels. Just as one wouldn’t expect an employee who puts in 10% effort to receive 100% pay, why would you expect an infrastructure resource that does 10% of the work to receive 100% payment? Yet this is what occurs in the cloud daily, adding up to the $62 billion overspend. The new paradigm of optimizing infrastructure in the cloud has three metrics: a) at-rest capacity, b) response velocity, and c) termination velocity.
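The 10% analogy can be made concrete with a little arithmetic. The Python sketch below computes what a resource effectively costs per hour of capacity actually used, and how much of the bill pays for idle capacity. The hourly rate, the utilization figure, and the function names are hypothetical, chosen only for illustration.

```python
def effective_hourly_cost(hourly_rate: float, utilization: float) -> float:
    """Price paid per hour of capacity that is actually used."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return hourly_rate / utilization


def idle_spend(hourly_rate: float, utilization: float, hours: float) -> float:
    """Portion of the bill that pays for capacity sitting idle."""
    return hourly_rate * hours * (1 - utilization)


# Hypothetical instance: $0.40 per hour, 10% utilized, running a 730-hour month.
rate, util, hours = 0.40, 0.10, 730
print(f"effective cost per utilized hour: ${effective_hourly_cost(rate, util):.2f}")
print(f"monthly spend on idle capacity:   ${idle_spend(rate, util, hours):.2f}")
```

At 10% utilization, each hour of work actually performed costs ten times the list price, which is the infrastructure equivalent of paying full salary for 10% effort.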

Fixed Cost or Variable Budgets

The other significant business dynamic to analyze is the budgeting process: does infrastructure spending have to be a fixed-cost line item, or can it vary with a performance metric? Unfortunately, the practice of keeping a fixed budget line item has led to provisioning and retaining excess capacity that will likely never get used, at least not at the levels of the hard-working individuals in an organization who put in countless extra hours and operate at 110% of expectation. What is the actual percentage utilization of a resource in the cloud? Would you believe that most cloud computing resources run at somewhere between 10% and 30%? In larger enterprises, the cost of this infrastructure can equal that of a large team of people.

Another factor behind this is the switch from CAPEX to OPEX, and how cloud vendors have taken advantage of company budgeting methods by giving discounts for capacity reservation. Vendor offerings for cloud capacity management and cost optimization count a resource as underutilized when it tracks less than 10% usage for four consecutive days. If you start asking for savings on your spend, those savings typically come from what is called a reservation. It’s an advance payment for “reserved capacity,” and companies used to provisioning infrastructure with depreciation-type expenses understand this well. It is also essential to know how various tools distinguish well-utilized from underutilized cloud resources.
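The vendor rule of thumb described above (less than 10% usage for four consecutive days) is simple enough to sketch in Python. This is a minimal illustration and not any particular vendor's implementation; the function name, the resource names, and the daily usage figures are all hypothetical.

```python
from typing import Sequence


def is_underutilized(
    daily_usage_pct: Sequence[float],
    threshold_pct: float = 10.0,
    consecutive_days: int = 4,
) -> bool:
    """Return True if usage stays below the threshold for the required run of days."""
    run = 0
    for usage in daily_usage_pct:
        # Extend the streak on a low-usage day, otherwise reset it.
        run = run + 1 if usage < threshold_pct else 0
        if run >= consecutive_days:
            return True
    return False


# Hypothetical week of average daily utilization (percent) per resource.
fleet = {
    "web-1": [35, 42, 8, 9, 38, 41, 40],
    "batch-7": [12, 6, 4, 7, 9, 30, 5],
    "legacy-db": [3, 2, 4, 3, 2, 3, 4],
}
flagged = [name for name, usage in fleet.items() if is_underutilized(usage)]
print(flagged)  # ['batch-7', 'legacy-db']
```

Note how strict the rule is: a resource that idles at 15% all week is never flagged, which is why it matters how each tool defines "underutilized."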

Just as employees can be let go for underperformance, infrastructure associated with applications that don’t perform can be pruned. Pruning in the infrastructure space can resemble reducing the hours of a contract resource: instead of 40 hours per week, it could be 20 hours per week or less. This kind of arrangement can be made without legal complexity as long as you aren’t on a fixed cost (the equivalent of a permanent employee, for whom reduced hours may not mean reduced pay because of contractual obligations).
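The difference between the contractor and the permanent employee can be sketched in Python as a billing rule. In this simplified model, which is an assumption and not any provider's actual pricing, a variable-cost resource is billed for the hours it runs, while a fixed commitment is billed for the committed hours even when usage drops. The hourly rate is hypothetical.

```python
def weekly_cost(
    hourly_rate: float,
    hours_used: float,
    committed_hours: float = 0.0,
) -> float:
    """Weekly bill: pay for hours used, but never less than the committed hours."""
    billable_hours = max(hours_used, committed_hours)
    return hourly_rate * billable_hours


RATE = 0.40  # hypothetical price per hour

# Variable cost: cutting the schedule from 40 to 20 hours halves the bill.
print(weekly_cost(RATE, 40), weekly_cost(RATE, 20))

# Fixed commitment of 40 hours: the same cut saves nothing.
print(weekly_cost(RATE, 40, committed_hours=40), weekly_cost(RATE, 20, committed_hours=40))
```

Under this model, pruning hours only turns into savings when the resource is not locked into a fixed commitment.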

Technology Savings

The most prominent area of cost savings is virtualization technology or, to oversimplify, the type of server or computer with its specific characteristics or features. These virtualization technologies have many features that a given workload may or may not be able to use. By selecting the kind of virtualization technology that matches what one’s workload can actually use today, one can discover cost savings.

Another area of cost savings is storage, which comes in types with varying levels of redundancy and recoverability. Choosing the right storage type for each workload can lead to cost savings in the cloud. A further area is configuration and setup intricacies.

Until recently, the analysis of cost savings was a complex skill that required specialized engineers to spend countless hours on expert analysis of multiple computer-generated logs. Standardization of these cloud logs, along with business-intelligence-type tools, then made it possible to generate savings. Nowadays, with artificial intelligence (AI) technologies, these logs can be accessed in seconds, expert decision making applied in seconds, and the exact amount of potential savings in the cloud discovered and reported in a few minutes.

Copyright 2024 The CEOWORLD magazine. All rights reserved. This material (and any extract from it) must not be copied, redistributed or placed on any website, without CEOWORLD magazine's prior written consent. For media queries, please contact: info@ceoworld.biz